1ms GTG vs 1ms MPRT

- Thread starter xiwos



bjornl

Honorable

mprt is a more accurate measurement of motion blur.

gtg is Gray to Gray pixel transitions. But the measurement is typically from 10% transition to 90% transition. So actual gtg (0-100) will almost always be much higher than the indicated value.

mprt is Moving Picture Response Time


The other day I saw a review by Hardware Unboxed (YouTuber). He said that MPRT cannot give you true 144Hz; instead it will give you about 100Hz on the AOC curved gaming monitor. So whom to believe now? Please help, it's very confusing. And are VA panels better than TN for gaming and light editing work?

Glenwing

Splendid

Just ignore response times, they are meaningless, like I said. And don't pay attention to the refresh rate stuff, it doesn't work like that.

mdrejhon

Distinguished

I did some research on why manufacturers were so inaccurate on GtG -- and recently figured out why we've stuck to an imperfect standard.

Please read below why the imperfection was a forced requirement.

We are not always happy about it, but we have to understand at least the "why".

I actually replied on the Linus Tech Tips forums on the same topic, so I'll crosspost my post here:

---

I recently investigated this quandary -- and as it turns out -- there's already an industry standard method that the manufacturers are using -- and found out the reasons why it's sometimes inaccurate/subjective.

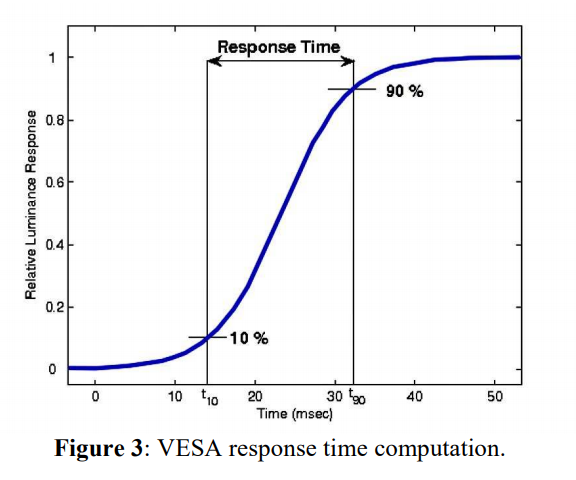

What the manufacturers are doing is the VESA method, which only measures from 10% to 90% GtG.

(Credit: scientific paper)

This actually misses a lot of the GtG from 0% to 100%.

But this was necessary in the past because electronic equipment needed to have automatic cutoff points to stop measuring. In many old screens, GtG never quite reached 0% or 100% because of noise margins in the measuring equipment, as well as noise margins in the display (e.g. noise/flicker in the pixels).

Also, many very old 33ms LCDs sometimes took several seconds (and erratically, due to noise margins) before GtG 100% properly triggered, even though the remaining difference was well below the human-visible noise floor. And most of the human-visible GtG is through the middle region. With cutoff points, measured numbers can be compared much more reliably between displays. A GtG 2ms display was obviously faster than a GtG 33ms display, as long as the GtG measuring technique was standardized -- even if the real GtG(100%) was a bit different. The 10% and 90% points are somewhat arbitrary and human-selected, but there were good reasons for the existence of the industry-standardized cutoff points.

But that can also create a divergence between what is human-seen and what is measured. And manufacturers end up being blamed for inaccurate specs. But there's actually a method, reasons, and industry standard for GtG measurements.
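To put a rough number on how much the 10%-90% window can leave out, here is a minimal sketch. It assumes a pixel that follows a simple exponential (first-order) response, which is only an idealization and not how any particular panel behaves. The function names and the 99% endpoint are made up for illustration.

```python
import math

def time_to_reach(fraction, tau_ms):
    """Time (ms) for an idealized exponential pixel response
    1 - exp(-t / tau) to complete the given fraction of a transition."""
    return -tau_ms * math.log(1.0 - fraction)

def gtg_10_90(tau_ms):
    """GtG as measured between the 10% and 90% cutoff points."""
    return time_to_reach(0.90, tau_ms) - time_to_reach(0.10, tau_ms)

# Assumption: pick the time constant so the 10%-90% figure is exactly 1 ms.
tau_ms = 1.0 / math.log(9.0)

quoted = gtg_10_90(tau_ms)          # the cutoff-based "spec sheet" style number
to_99 = time_to_reach(0.99, tau_ms) # 0% to 99% of the same transition

print(f"10%-90% GtG: {quoted:.2f} ms")
print(f"0%-99% time: {to_99:.2f} ms")
```

Under this assumed model, the same transition that measures 1 ms between the cutoffs takes roughly twice as long to get from 0% to 99%, and an ideal exponential never reaches a true 100% at all, which is one way to see why a cutoff is needed.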

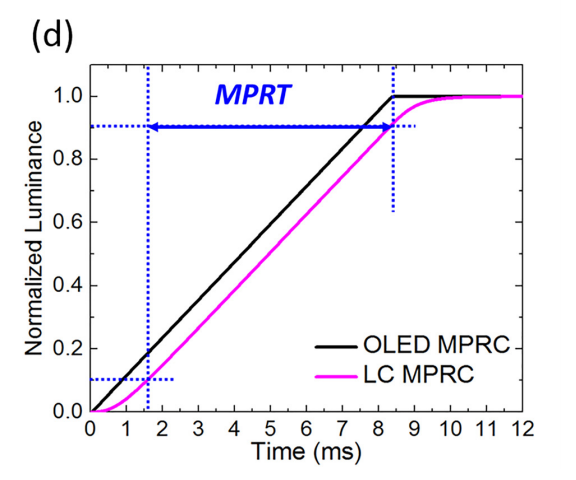

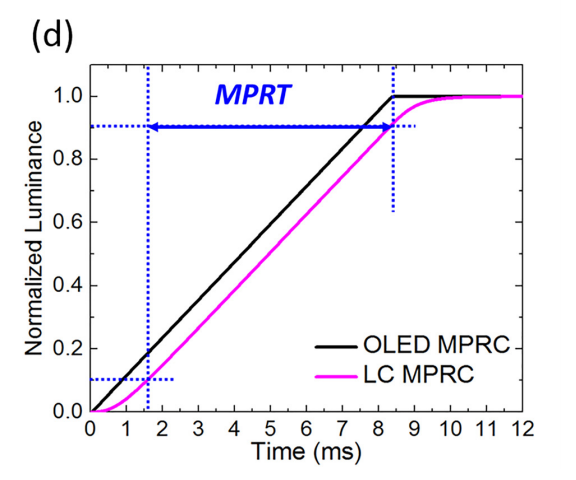

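Now, GtG measurements well below a refresh cycle tend to be hidden in the persistence (MPRT) motion blur. A 1ms GtG display can have 16.7ms MPRT, for example on a 60Hz sample-and-hold display where each frame stays visible for the full 1/60 second.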

GtG is the pixel response time, while MPRT more closely represents the pixel visibility time. MPRT is more accurate for measuring display motion blur.
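As a back-of-the-envelope sketch of the persistence point: on an idealized sample-and-hold display with negligible GtG, each frame stays visible for about one refresh period, and the blur seen by a tracking eye is roughly motion speed times that visibility time. The function names and the `visible_fraction` parameter (a stand-in for a strobed backlight) are assumptions for illustration, not part of any measurement standard.

```python
def sample_and_hold_mprt_ms(refresh_hz, visible_fraction=1.0):
    """Approximate persistence (ms): how long each frame stays visible.
    visible_fraction < 1.0 models a hypothetical strobed backlight."""
    return 1000.0 / refresh_hz * visible_fraction

def blur_width_px(mprt_ms, speed_px_per_s):
    """Approximate motion blur width (pixels) for an eye tracking the motion."""
    return speed_px_per_s * mprt_ms / 1000.0

speed = 960  # pixels per second, an arbitrary example scrolling speed

for hz in (60, 144, 240):
    mprt = sample_and_hold_mprt_ms(hz)
    print(f"{hz} Hz: MPRT ~{mprt:.1f} ms, blur ~{blur_width_px(mprt, speed):.1f} px")
```

At 60Hz this gives about 16.7ms of persistence regardless of whether the panel's GtG is 1ms or 5ms, which is why a fast GtG figure alone does not tell you how blurry motion will look.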

MPRT is measured differently, but has some similar "cutoff thresholds" (10% and 90%), as specified in this paper.

Also, recently, Blur Busters has written a new article: GtG versus MPRT -- the two different pixel response benchmarks. They are both standardized.

Hopefully this demystifies things.

Cheers,

---

> Manufacturers have no problem flat out lying about response times, so both of them are basically meaningless.

They are not "flat out lying". They are "using an imperfect measurement standard".


> mprt is a more accurate measurement of motion blur.

This is correct.

