AMD's looming Ryzen 7 5800X3D processor has popped up in the Geekbench 5 database.

Ryzen 7 5800X3D Beats Ryzen 7 5800X By 9% In Geekbench 5 : Read more


So why does the cache help in the multicore test but not the single core one?

My guess would be the ability of the L3 cache to hold more of the data that all the cores need. With a single core, only the current job's data is stored in the cache; when that job is done, the cache is refreshed with the next single-core job's data, and so on. Since the multi-core test runs a different job on each core simultaneously, having more L3 allows more of the data each core needs for its respective job to stay in cache, which means fewer cache misses going out to RAM, which has about 6-7x higher latency than cache access. TLDR: a big enough L3 lets all cores be fed data at low latency on the 5800X3D, versus falling back to high-latency RAM when the 5800X's L3 cache is full.
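To put rough numbers on that argument, here is a minimal sketch of the average latency for accesses that reach L3, as a function of how often they hit. The 10 ns cache latency, the 65 ns RAM latency (about 6.5x, in line with the 6-7x figure above), and the hit rates are all assumed values for illustration, not measurements of either chip. The model also simplifies by charging a miss only the RAM latency.

```python
def average_access_ns(hit_rate: float, cache_ns: float, ram_ns: float) -> float:
    """Expected latency of one access that reaches L3.

    Hits are served at cache latency, misses at RAM latency
    (a simplification: the miss path is charged RAM latency only).
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * cache_ns + (1.0 - hit_rate) * ram_ns


# Assumed, illustrative latencies (not measured on a 5800X or 5800X3D).
CACHE_NS = 10.0
RAM_NS = 65.0  # roughly 6.5x the cache latency

if __name__ == "__main__":
    # Hypothetical hit rates: a larger L3 should push the hit rate up.
    for hit_rate in (0.5, 0.7, 0.9):
        avg = average_access_ns(hit_rate, CACHE_NS, RAM_NS)
        print(f"hit rate {hit_rate:.0%}: about {avg:.1f} ns per access")
```

Under these assumptions, every extra point of hit rate shaves latency off the average, which is where a bigger L3 would pay off when all eight cores are competing for it.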

We can look back at AnandTech's deep dive on Zen 3 to get answers for this.

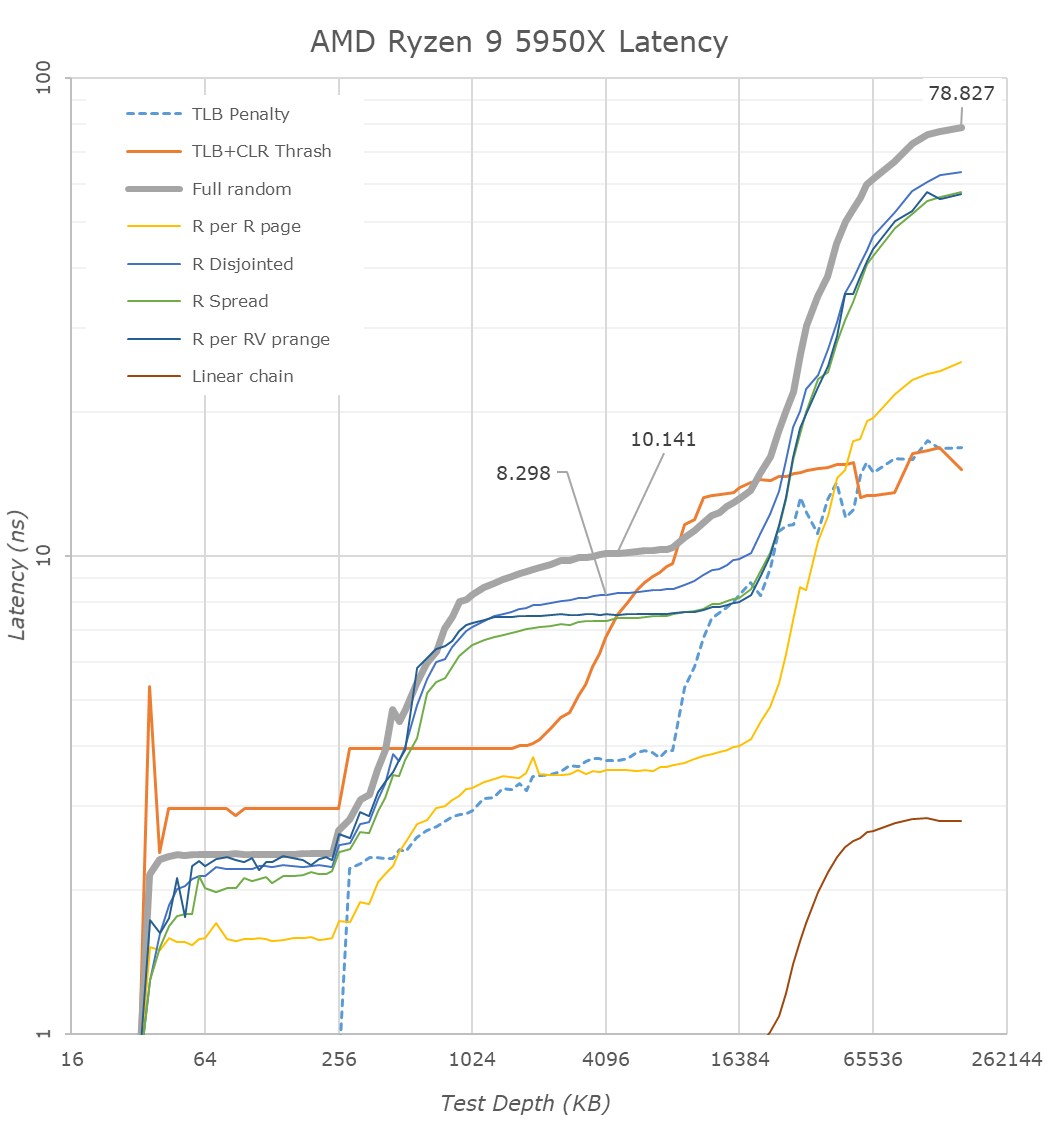

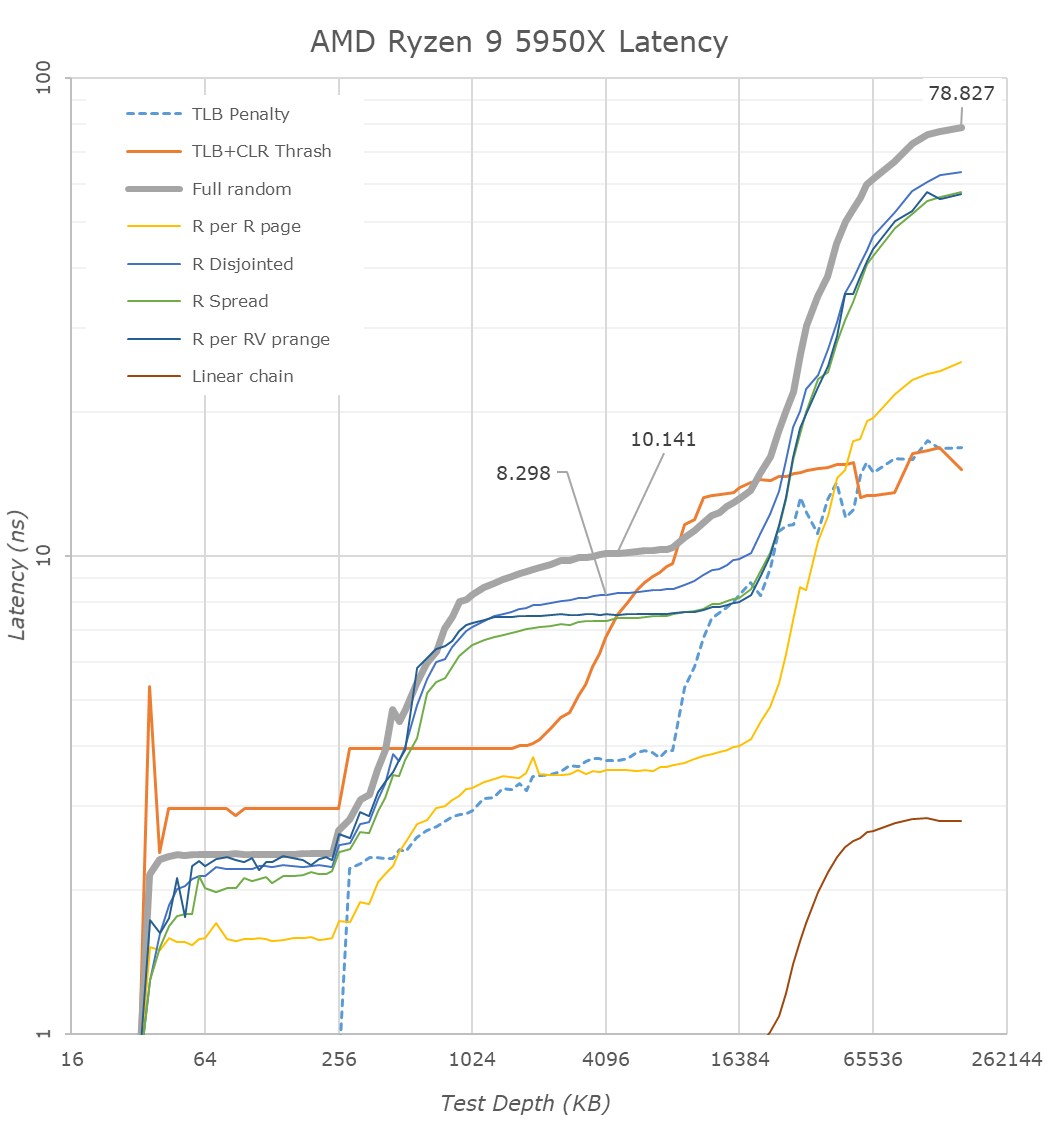

Yes, you make valid points and you are certainly learned in the sci-art of microarchitecture. However, I would like to correct a few things you bring up that might help you understand why a bigger L3 is helpful in certain CPU workloads. First, the RAM latencies you are referencing (less than 90ns) are the best-case scenario, i.e. data is requested on an open, active row and column that is already prepped to be read. This is almost never the case. AIDA64 and other latency and bandwidth benchmarks always give the best-case latency for cache and RAM, so you must treat the two the same way in how their latencies react to different data locations. For example, my Ryzen 9 5950X L3 has a 10.4ns latency according to AIDA64, but as the graph you provided shows, worst case is closer to 90ns. The same goes for RAM: my CAS 14 3800MHz RAM has an AIDA64 latency of 60.2ns, but worst case is closer to 1 µs or more due to the row precharge, access, read, etc. steps needed to find and transfer the data, plus a tRFC refresh cycle that can halt the data fetch in its tracks and cause the fetch to restart.

There's also another issue: you can only have so much cache before you spend more time looking through cache than it would've taken to fetch the data from RAM.

- It's important to note that the L3 cache is a victim cache of L2. Basically, when L2 gets full, the oldest thing gets kicked out into L3. Also as a result, L3 cannot prefetch data.

- Zen 3's increased cache came at a cost of higher latency and lower bandwidth per core. It was meant more to service multi-core workloads, since this allows every core in the CCX to have the same latency when accessing each other's data

- They made the observation that the L2 TLB is only 2K entries long, with each entry covering 4KiB. This means per core, the TLB can only cover 8MiB of L3 cache. So a single core has issues even without the extra cache from V-Cache
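The TLB point is simple arithmetic, sketched below. The entry count (2K taken as 2048) and the 4 KiB page size come from the bullet above. The 32 MiB and 96 MiB L3 sizes are the public specs for the 5800X and 5800X3D rather than figures from this thread, and the function name is just for illustration.

```python
KIB = 1024
MIB = 1024 * KIB


def tlb_reach_bytes(entries: int, page_bytes: int) -> int:
    """Memory a TLB can map at once: one page per entry."""
    return entries * page_bytes


# From the bullet above: 2K L2 TLB entries, 4 KiB per entry.
L2_TLB_ENTRIES = 2048
PAGE_BYTES = 4 * KIB

# Assumed L3 sizes (public specs, not stated in this thread).
L3_SIZES_MIB = {"5800X": 32, "5800X3D": 96}

if __name__ == "__main__":
    reach = tlb_reach_bytes(L2_TLB_ENTRIES, PAGE_BYTES)
    print(f"L2 TLB reach: {reach // MIB} MiB")
    for chip, l3_mib in L3_SIZES_MIB.items():
        share = reach / (l3_mib * MIB)
        print(f"{chip}: TLB reach covers {share:.0%} of a {l3_mib} MiB L3")
```

This only counts 4 KiB pages; larger pages would extend the reach, which the bullet above does not go into.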

For example if we look at this chart from AnandTech's article:

(note the y-axis is logarithmic)

If we assume the latency is linear after 16MiB, which appears to be 15ns in the worst case, and L3 cache gets full, you're now looking at 90ns just to look through the whole thing. It would've been faster to go look at RAM at that point.
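The 90ns figure falls out of a straight-line extrapolation, sketched here. The 15 ns at 16 MiB reference point is the value read off the chart above; the linear scaling is the assumption stated in the post, not a measured property of the cache; and the 96 MiB total for the 5800X3D's L3 is the public spec, not something stated in this thread.

```python
def extrapolated_latency_ns(
    size_mib: float,
    ref_size_mib: float = 16.0,
    ref_latency_ns: float = 15.0,
) -> float:
    """Scale the reference latency linearly with cache size.

    This mirrors the post's assumption that latency grows linearly
    past the 16 MiB point; real caches need not behave this way.
    """
    if size_mib <= 0 or ref_size_mib <= 0:
        raise ValueError("sizes must be positive")
    return ref_latency_ns * (size_mib / ref_size_mib)


if __name__ == "__main__":
    # 32 MiB: stock 5800X L3. 96 MiB: assumed 5800X3D total L3.
    for size_mib in (16, 32, 96):
        latency = extrapolated_latency_ns(size_mib)
        print(f"{size_mib} MiB -> {latency:.0f} ns (linear assumption)")
```

Whether latency really scales this way with V-Cache is exactly what launch-day measurements would have to show.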

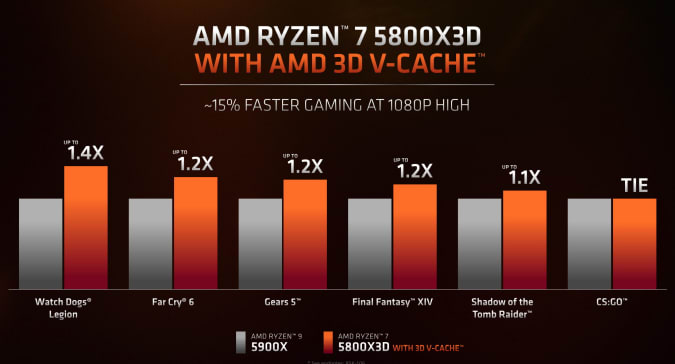

Actually the results are not surprising. In fact, I feel AMD should not even bother releasing a stop gap solution here because, in my opinion, this offers too little performance uplift and too much of a price increase for most users. While it is true that Intel has regained the performance crown, the most important thing is price. Zen 3 may be older, but if the price is right, it is still a viable option. But for USD 449, which is basically the launch price of the R7 5800X, the performance uplift is unexciting, so I doubt they will see any improvement in sales, nor will they be able to retake the performance crown.

I don't think it is a stop gap, but more of a prototype test. If this works well in real world use and with consumers, the tech can be used in future processors, with lowered prices and better performance. If it doesn't, this will be the first and last 3D V-Cache CPU.

The problem I'm seeing is AMD hyped this up to be some sort of game changer that would bring appreciable performance upgrades over the non V-Cache CPUs. While I don't disagree they wanted to throw this tech out on something to see how it works, I think they should've released it with less fanfare.



You don't sell product by timid marketing. Unfortunately, yes, lots of hype can create unreal expectations/excitement amongst consumers. One thing is for certain, AMD/Intel/Nvidia are going to show cherry-picked metrics in their marketing slides that paint the respective product in the best light.

The problem I have is AMD seems to be positioning the 5800X3D as a gaming CPU that will take back the performance crown (or if not, at least stand on the podium). Most games, especially esports titles, can't take full advantage of an 8C/16T processor yet. So while sure, the 5800X3D may be able to beat out a 5800X in multicore loads, that won't translate much to games. And depending on the game or API used, single core performance is still going to be the deciding factor in how many frames per second you get. And then we have the apparent snafu that AMD teased a 5900X/5950X with 3D V-Cache, but decided to release only a 5800X.

Also, it's a fairly novel design/fab achievement. They deserve to be proud.

As discussed above, V-Cache can't/shouldn't be a silver bullet for all aspects of CPU performance. Like we see in the single-core Geekbench score, there will be scenarios where little/no gains are realized. That just means that the higher level caches are sufficiently proportioned/designed to handle said workloads. Don't get me wrong, you still have to get benchmark "wins", but the important part is realizing what metrics are most indicative of "real world" workloads for some/most users, and what metrics are more niche.

We'll see in the reviews on launch day. Until then, we can only trust AMD.

As much as we can trust Apple to claim that their M1 Ultra GPU could match an RTX 3090.

It may not be everything it was said to be, but up to 10% more performance at lower clock speeds is pretty great.

This is where I am at. Coming from the 3700X, a jump to the 5800X3D would be a worthwhile improvement for me if it averages a 10% increase over the 5800X/5900X, since I'm running a 240Hz monitor and wouldn't need to buy a new motherboard. It all hangs on the reviews.

It's really only 10% above the 5800X though. I'm not sure why you would drop an extra $100 over the 5800X for something that is equal gaming-wise but inferior multithreading-wise (5800X3D vs 5900X). Drop the $100 on the 5900X and you've got a better CPU than the 5800X3D.

$100 in the grand scheme of my rig isn't that much. I've spent more on fans and lighting, so a 10% lift in fps for $100 is quite appealing. If it's genuinely as good as or better than any other CPU for gaming, it will give me the best performance from my AM4 motherboard; a 3700X is showing its limitations paired with a 240Hz monitor. I can then put off my next big upgrade until DDR5 has matured, with better performance gains and lower cost.

it's all on perspective. if this was a new intel cpu that was 10% over the last gen AMD chip, the headline would be "INTEL RETAKES THE CROWN WITH A MASSIVE BEATDOWN OF AMD!!!"

or even if it was a 10% gain from one intel gen to the next "refresh", it would still get great fanfare as a huge increase and a "just go buy it" recommendation.