The difference is that the old power supplies worked with pure AC and didn't deal with PWM power, and that difference is massive. Pushing continuous signals onto a line rather than simulated PWM means that, though the average power is the same, the peaks of PWM are considerably higher, and this has a cost. Shorting power in the supply so that different connectors share the same power is nothing new, and that is not the argument. The argument is that these leads and connectors are rated for an average power. That works for RMS-controlled sine waves, but PWM is basically a forced square wave that supplies a peak value well above the average, and not in a nice shape. The short bursts (like digital signals) draw high peaks of power, but only for short, controlled periods. That's fine when it's signaling in the core of a CPU, where the voltages are kept to 3.3 V, but at this level of power draw it becomes an issue.

Am I talking to anyone who understands anything about signalling and power at frequency? All I am getting is answers that just say "this has been used before!" But it's not the same thing, and it's not for the same purpose.

Intel is trying to push its PWM power systems on people and get them installed on the motherboard, essentially to shrink the power supply system and avoid using large capacitors to remove wasted ripple. But these compromises always come at a price.

The whole point of having multiphase signaling on the motherboards was to stop using overdriven power for digital signaling, and instead create square waves from combinations of multiple sine waves added together. This ups efficiency and puts less stress on high-power components. Intel's tech does the opposite and makes it harder, by continually having to change the PWM frequency to match demand. So rather than produce a nice sine wave, polyphase system, which they have perfected on the motherboards, Intel wishes to save space and bend the principles of electronics by using switching power signals to provide average power.

The sine wave system created an individual sine wave per line and combined them before use. Intel's tech just forces the same PWM signal through all lines. It becomes an issue when the adapter is designed and used on the basis of average power: given that PWM works at a maximum of 50% duty cycle, it has to provide more voltage in its high state to average out to the designed voltage it needs to feed. So a 5 V system needs a 10 V supply minimum at 50% duty, meaning peak values are twice the rated value by design...

So what happens when power draw is maxed? The voltage is 10 V for 50% of the time, and though that averages to 5 V, it possibly puts more voltage on devices that are rated for far lower. Current draw is on demand, so when it increases, the peaks are 2x the rated value rather than the root 2 (about 1.4x) that they were before, which is roughly 0.6x higher.
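To put numbers on the 5 V / 10 V example, here is a rough sketch of the arithmetic only. It assumes an ideal rectangular PWM wave with no output filtering, and it compares against a sine wave rated at 5 V RMS; the function names are made up for illustration and are not from any real tool or spec.

```python
import math


def pwm_peak_for_average(v_avg, duty):
    """Peak voltage an ideal rectangular PWM wave needs so that its
    time-average equals v_avg at the given duty cycle (0 < duty <= 1)."""
    if not 0 < duty <= 1:
        raise ValueError("duty must be in the range (0, 1]")
    return v_avg / duty


def pwm_rms(v_peak, duty):
    """RMS voltage of an ideal rectangular PWM wave (0 V in the low state)."""
    return v_peak * math.sqrt(duty)


def sine_peak_for_rms(v_rms):
    """Peak voltage of a sine wave with the given RMS value."""
    return v_rms * math.sqrt(2)


rated = 5.0  # rated voltage from the example in the post
duty = 0.5   # 50% duty cycle, as assumed in the post

pwm_peak = pwm_peak_for_average(rated, duty)
sine_peak = sine_peak_for_rms(rated)

print(f"PWM peak for {rated} V average at {duty:.0%} duty: {pwm_peak:.2f} V")
print(f"Sine peak for {rated} V RMS: {sine_peak:.2f} V")
print(f"PWM peak factor: {pwm_peak / rated:.2f}x, sine peak factor: {sine_peak / rated:.2f}x")
print(f"Difference in peak factor: {(pwm_peak - sine_peak) / rated:.2f}x")
print(f"RMS of that PWM wave: {pwm_rms(pwm_peak, duty):.2f} V")
```

The gap between the two peak factors is where the "roughly 0.6x higher" figure comes from. Note this only models the raw waveform; any filtering between the switch and the connector would change the picture.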

So with different lines all being shorted, every device shorted to the same connector, and every part of the system that is connected together, is subjected to these same voltage spikes.

My guess is that the power adapter meltdown comes from high sustained use. If it were simply a case of not connecting the card up correctly, that would void any warranty and liability for Nvidia, and they could refuse to replace anything. Or, on the other hand, the users tried to overclock their cards and pushed the power, which is already up at its limits, over the threshold.

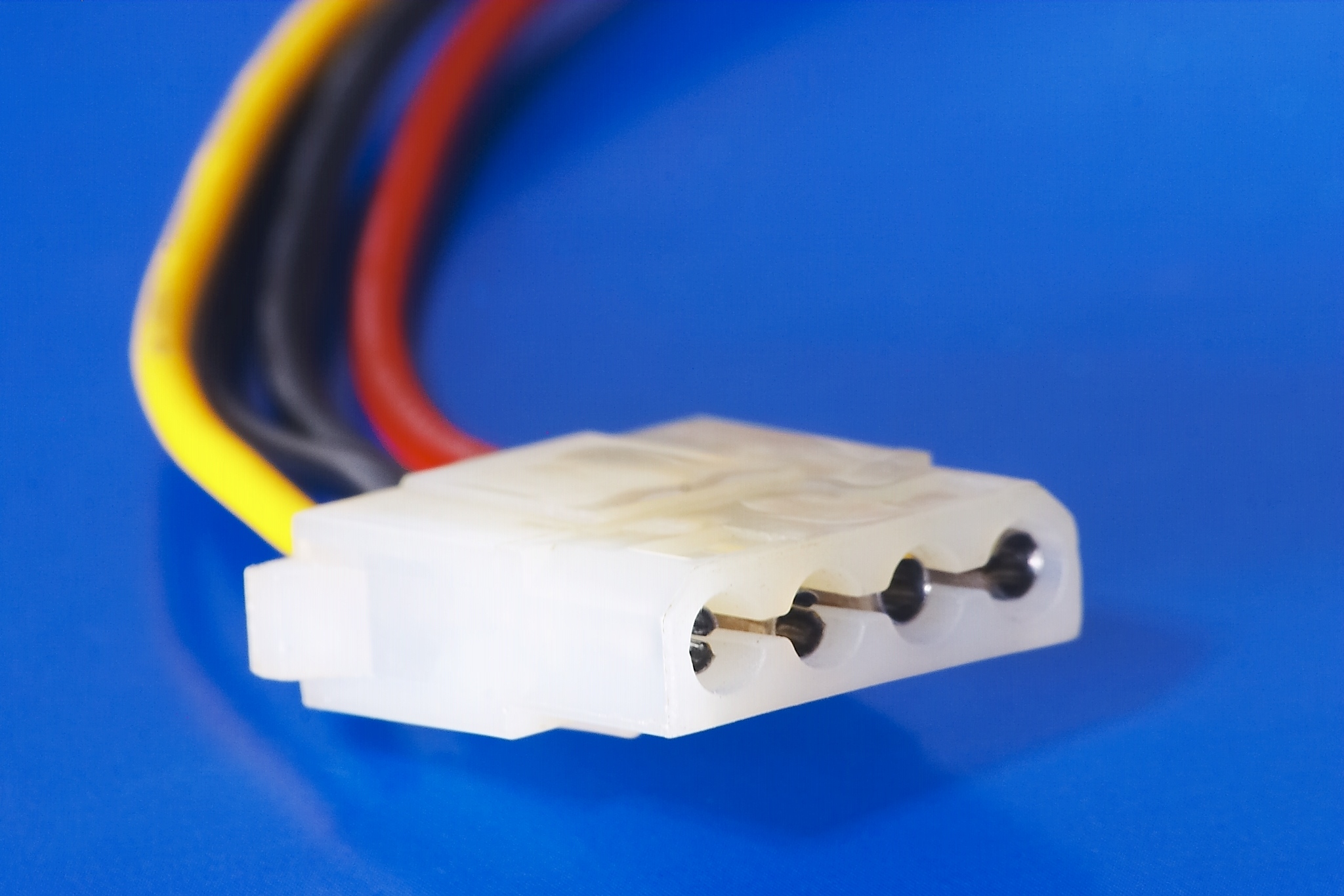

It doesn't matter if the transistors on the board can take the voltage. What matters is that any system is only as strong as its weakest rated part, and here that is the connector system.