Maybe someone can point me to a good read, but I always had this question in my head - but why is multi GPU still up to the developer to implement and not something that just works base off the the card's task manager to assign work out? This way simply supporting "DX13" granted you a boost in hardware scaling (even if it wasn't 100% scaling but a more modest 80% scaling).

I guess I just don't understand how the current cards work isn't expanding just over a "NV-Link" and process much like if it was just a bigger card instead?

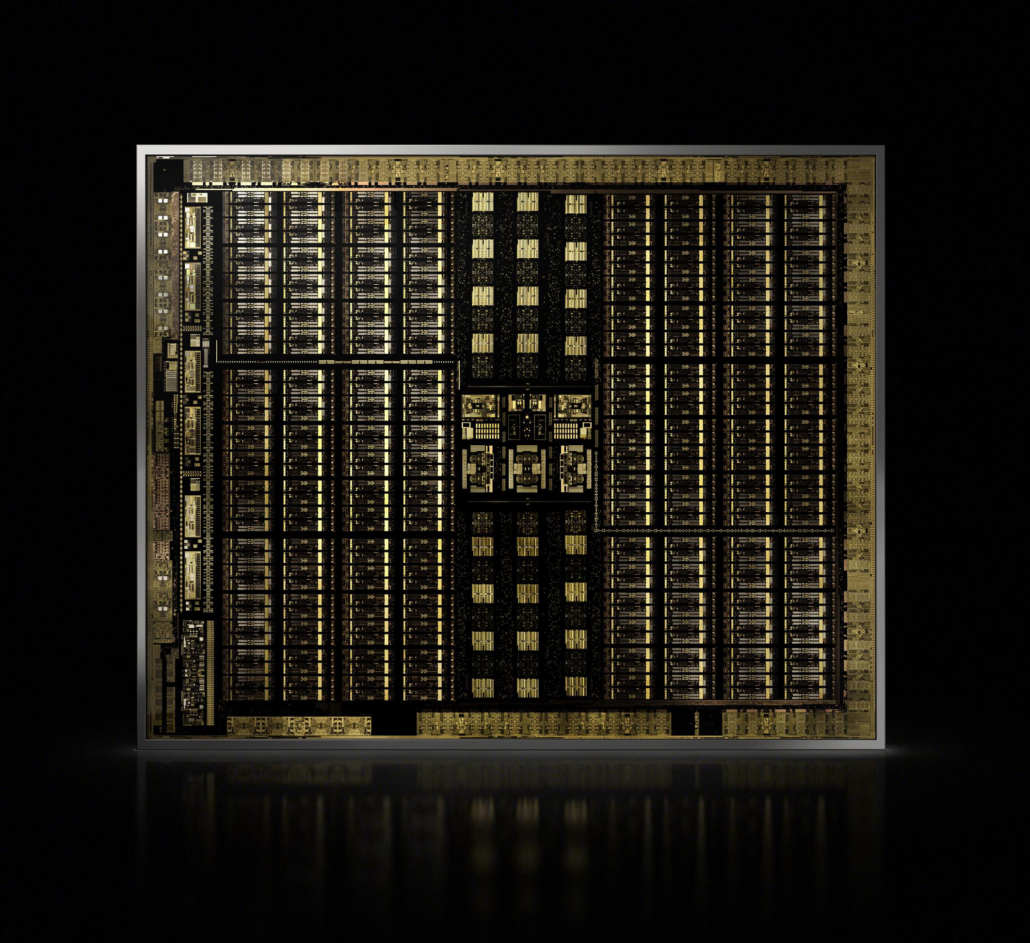

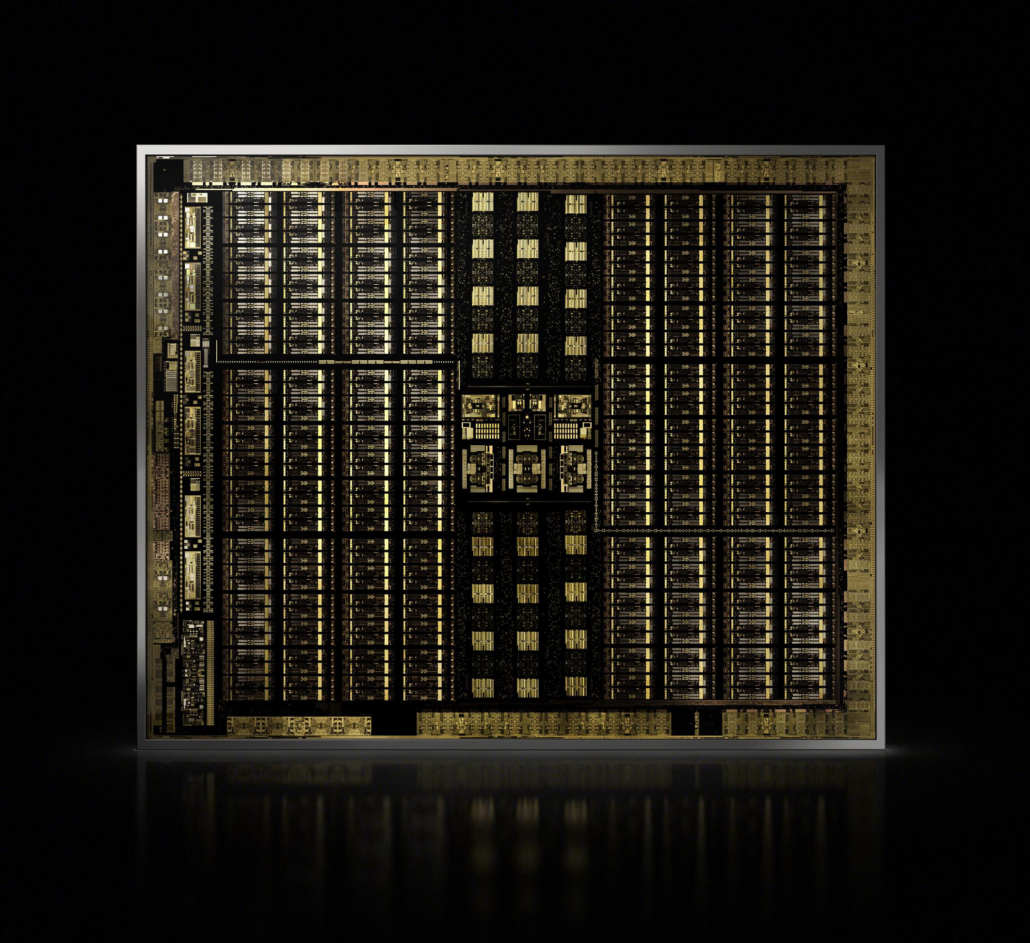

From this image we can see everything is already split into different section. Each section doing a type of job for that core type. There is a master task engine in there that split each function call and then assigns it to that core to do the work. If we was to expand on this - why don't we send that same information directly to another care to do async functions as well splitting the work on two cards. "Well what if card 2 takes longer" - well it actually wouldn't matter because you be waiting either way for xx function to complete. So unless the card isn't about the same performance level as card 1 - you should get about the same amount or more work done that card 1 is doing.

I guess what I am saying is just create a interconnect that connects memory and the different cores such as they appear as one unit instead. My understand AMD might be doing something like this with their CPUs already - so why can't we do this at a "larger" scale between two GPUs instead?

Or is there a underlyn understanding I am not understanding that a developer has to make happen that code/hardware can't just understand to do on its own?

I guess I just don't understand how the current cards work isn't expanding just over a "NV-Link" and process much like if it was just a bigger card instead?

From this image we can see everything is already split into different section. Each section doing a type of job for that core type. There is a master task engine in there that split each function call and then assigns it to that core to do the work. If we was to expand on this - why don't we send that same information directly to another care to do async functions as well splitting the work on two cards. "Well what if card 2 takes longer" - well it actually wouldn't matter because you be waiting either way for xx function to complete. So unless the card isn't about the same performance level as card 1 - you should get about the same amount or more work done that card 1 is doing.

I guess what I am saying is just create a interconnect that connects memory and the different cores such as they appear as one unit instead. My understand AMD might be doing something like this with their CPUs already - so why can't we do this at a "larger" scale between two GPUs instead?

Or is there a underlyn understanding I am not understanding that a developer has to make happen that code/hardware can't just understand to do on its own?