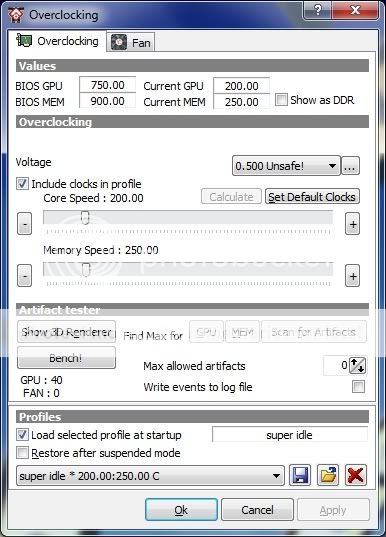

[citation][nom]joeman42[/nom]What is really needed is a "continuous" OC utility that can detect artifacts during actual use and adjust accordingly. I've noticed that my max OC tends to change each time I test and depending on the tool I test with (e.g., atitool, gputool, rivatuner, and my favorite, atitraytool). Some games, l4d in particular, crash at the slightest error. Others such as COD and Deadspace are somewhat tolerant. Games like Far Cry 2 and Fear 2 don't seem to care at all. It would be nice if the utility could take this into account. As for the tools themselves, Atitraytool has far and away the best fan speed adjuster; the dual ladder Temp/Speed is a model of simplicity. Plus, it can automatically sense a game and auto OC just for the duration. Nothing like this exists on the NV side (you must explicitly specify each exe). Unfortunately, I am on a NVidia card now and Rivatuner is pretty much the only game in town for serious tweaking. IT IS A DESIGN DISASTER! Random design with no discernible structure. A help file which consists solely of the author bragging about his creation, without explanation as to where each feature is implemented or how to use it. And no, scattered tooltips are not an acceptable alternative. It took forever to figure out that I needed to create a fan profile, then a macro, and then a rule to fire the macro which contains the fan profile, just to set one(!) fan speed/temp point (and repeat as needed). Sorry for the rant, but I really hate Rivatuner![/citation]

Oh hello. That's what OCCT is for.