Nvidia lifts the veil on the first GeForce RTX 3070 performance figures.

Nvidia's GeForce RTX 3070 Beats 2080 Ti in First Performance Results : Read more


This is... pretty promising. Bear in mind that this is from Nvidia, as TH pointed out. On the other hand, games and drivers haven't been optimized for it yet.

I wonder why the 2080Ti was slightly faster in Control?


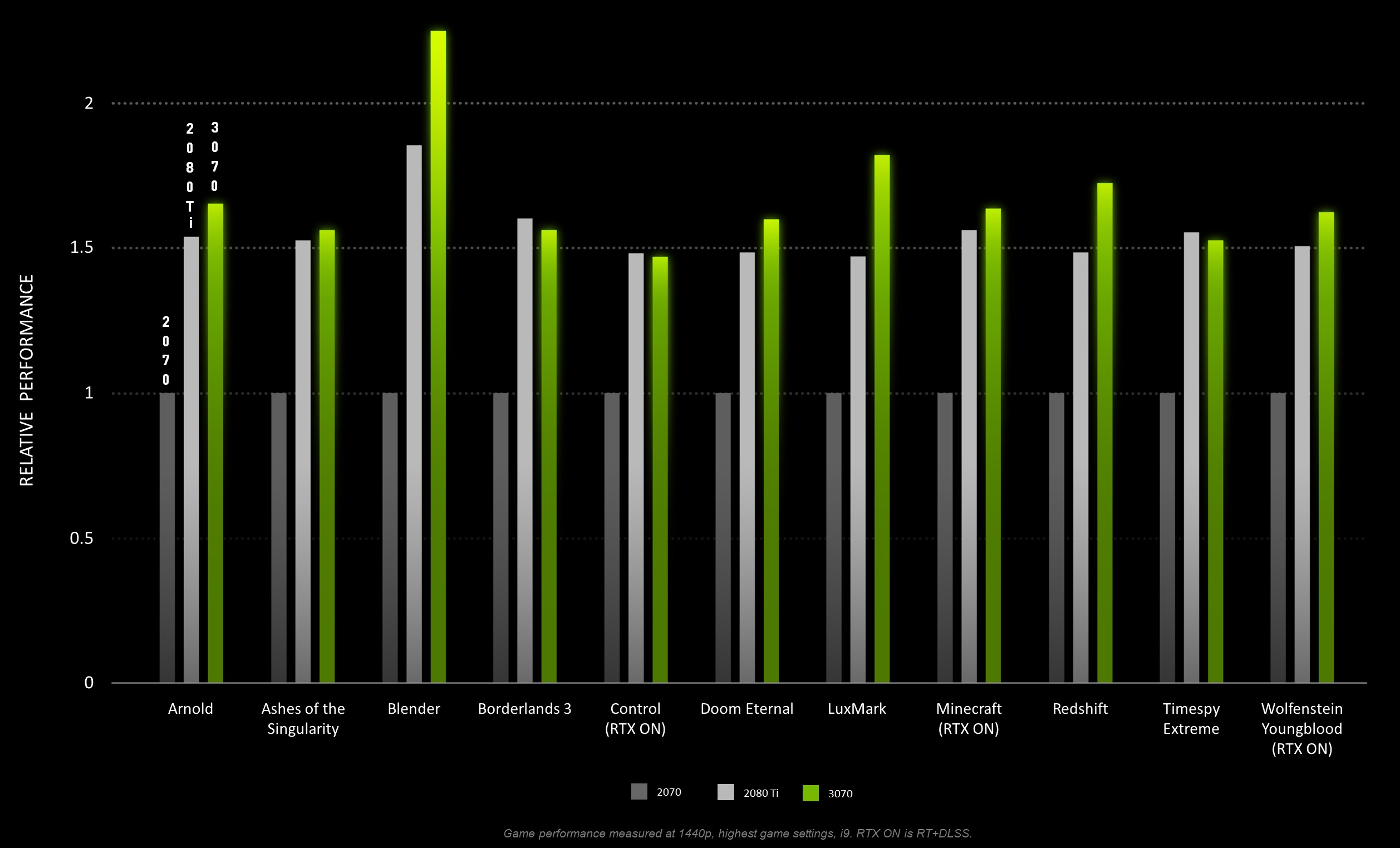

On average, the RTX 3070 was 8% faster than the 2080 Ti...

Keep in mind, that's not an average for gaming performance, but for a mix of games, rendering software and the Time Spy benchmark, and the rendering software is clearly throwing off the results. If we ignore the rendering software, the difference works out to only about 3%. And of course, half of those games have RTX and DLSS upscaling active, and we know from reviews of the other Ampere cards that Doom Eternal is a major outlier, not indicative of typical performance in other games. So it looks like, on average, the 3070 will likely be slightly slower than a 2080 Ti in most of today's games. In future games that make heavy use of RT it might manage to be slightly faster, but the Control results indicate that's not necessarily a given for AAA titles with heavy RT effects.

Yeah, I'm not sure; I found it very strange. Especially with RTX enabled, it should be faster. I suspect they were not using DLSS and, as a result, the 8GB frame buffer was being maxed out, because ray tracing uses more VRAM than regular lighting techniques.

However, DLSS should significantly reduce VRAM consumption, since you're cutting the render resolution by more than 2x, so this should not be a problem at all if you use DLSS. Running Control on my RTX 2060 Super with everything maxed out at 3440x1440 and DLSS rendering at 720p (Performance mode), I couldn't get my VRAM usage above 6GB.

Actually, they were in fact using DLSS upscaling for all of the RT games listed, according to the fine print at the bottom of the chart:

Game performance measured at 1440p, highest game settings, i9. RTX ON is RT+DLSS.


I’ve literally looked over dozens of reviews of the 3080 and 3090. There’s a reason Nvidia snuck SEVERAL rendering benchmarks into their performance graphs: Ampere's rendering performance is far higher than Turing's. They didn’t do this with their 3080 benches. Look up the Techgage 3080 review to see the huge difference in rendering performance between the cards, and you’ll see why Nvidia pumped this chart full of them.

Ah, thanks for that. Yeah, I agree, HyperMatrix: these results are purely from Nvidia, and as with the 3080 and 3090, I highly doubt the 2080 Ti will be slower than the 3070 in almost everything. The best-case scenario is probably both cards performing about equally (which I'm fine with; that still makes the 3070 a good card for $500). That explains the Control graph too. I don't really buy that the 2080 Ti won purely on tensor cores, because the 3070 has 3rd-generation tensor cores, which are faster than the 2nd-gen ones in the 2080 Ti. It's probably a mix of other factors as well.

Guys, please don’t buy the marketing on this. If you noticed in the chart, Nvidia stuck several rendering applications in there because Ampere's RT cores provide a massive boost over Turing for that function. And the very few games they showed are a best-case scenario for the 3070.

On average in gaming, assuming no VRAM limitations come into play, the 3070 will be 15-25% SLOWER than the 2080 Ti. Think of it this way:

- Nvidia claims the 3070 is 8% faster than the 2080 Ti.

- But we know the 3080 is 24% faster than the 2080 Ti in actual gaming (average of 15 popular games, TechPowerUp).

- We know that the 3080 has 50% more CUDA cores than the 3070.

- We also know the 3080 has 60% more memory bandwidth than the 3070.

So if, for example, the 2080 Ti gets 100 fps, the 3080 gets 124 fps. And since the performance of the 3080 is AT LEAST 50% higher than the 3070's (due to having 50% more CUDA cores and 60% more bandwidth), we can extrapolate that the 3070 will average no more than 124/1.5 ≈ 82.7 fps. It’ll likely be even worse than that, because the ratio of memory bandwidth to CUDA cores is even worse on the 3070. But we’ll stick to the best-case scenario. So the 3070 will be about 17% slower than the 2080 Ti. Or... the 2080 Ti will be about 21% faster than the 3070.

The numbers will be a bit higher for the 3070 in RT-bound situations and lower in VRAM-limited situations. But please don’t buy into this marketing garbage.
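The extrapolation in the post above can be reproduced in a few lines. This is a sketch of the poster's own assumption (3070 performance = 3080 performance / 1.5), not a real performance model, and `extrapolate_3070_fps` is a hypothetical helper. The last two lines compute the actual ratios from published spec-sheet figures (8704 vs 5888 CUDA cores, 760 vs 448 GB/s), which are not taken from the thread.

```python
def extrapolate_3070_fps(fps_2080ti: float,
                         uplift_3080_vs_2080ti: float,
                         ratio_3080_vs_3070: float) -> float:
    """Hypothetical helper: estimate 3070 fps, assuming the 3080 is exactly
    `ratio_3080_vs_3070` times faster than the 3070 (the poster's assumption)."""
    fps_3080 = fps_2080ti * (1 + uplift_3080_vs_2080ti)
    return fps_3080 / ratio_3080_vs_3070


# Inputs from the post: 2080 Ti = 100 fps, 3080 +24%, 3080/3070 = 1.5
fps_3070 = extrapolate_3070_fps(100.0, 0.24, 1.5)
print(f"3070 estimate: {fps_3070:.1f} fps")            # 82.7 fps
print(f"3070 vs 2080 Ti: {fps_3070 / 100 - 1:+.1%}")    # -17.3%
print(f"2080 Ti vs 3070: {100 / fps_3070 - 1:+.1%}")    # +21.0%

# Spec-sheet ratios (assumed figures: 3080 vs 3070)
cores_ratio = 8704 / 5888        # about 1.48x the CUDA cores
bandwidth_ratio = 760 / 448      # about 1.70x the memory bandwidth (GB/s)
print(f"cores: {cores_ratio:.2f}x, bandwidth: {bandwidth_ratio:.2f}x")
```

Note that linear scaling is the whole argument here; the reply below questions exactly that assumption.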

CUDA cores don't come anywhere close to scaling linearly. The 3090 has 20% more CUDA cores than the 3080, and quite often is only about 10% faster. If we assume only 50% scaling for CUDA cores, the 3070 would pretty much be in a dead heat with the 2080 Ti. On average, in non-ray-tracing titles, the 3070 will probably be a single-digit percentage behind the 2080 Ti, which is still a great showing for a $500 card. In two years, when Nvidia is getting ready to release Hopper, I wouldn't be surprised if driver optimizations and future titles making better use of the architecture put the 3070 8% ahead of the 2080 Ti.
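The partial-scaling argument can be made concrete with a simple linear-efficiency model, where only a fraction of the extra CUDA cores turns into extra performance. This is an illustrative assumption, not how GPUs actually behave; `scaled_speedup` is a hypothetical helper, and the core counts (8704 for the 3080, 5888 for the 3070) are spec-sheet figures rather than numbers from the thread.

```python
def scaled_speedup(core_ratio: float, efficiency: float) -> float:
    """Hypothetical model: speedup when only `efficiency` of the extra cores
    (core_ratio - 1) turns into extra performance."""
    return 1 + efficiency * (core_ratio - 1)


# Efficiency implied by the post's 3090 vs 3080 example:
# 20% more cores, about 10% faster -> 0.10 / 0.20 = 0.5
implied_eff = 0.10 / 0.20

# 3080 vs 3070 at 50% scaling (assumed core counts from the spec sheet)
speedup_3080_over_3070 = scaled_speedup(8704 / 5888, implied_eff)
print(f"3080 over 3070: {speedup_3080_over_3070:.2f}x")   # 1.24x

# With the 3080 about 24% faster than the 2080 Ti (figure quoted in the thread),
# the 3070 lands at roughly 2080 Ti level: the "dead heat" in the post.
rel_3070_vs_2080ti = 1.24 / speedup_3080_over_3070
print(f"3070 relative to 2080 Ti: {rel_3070_vs_2080ti:.2f}")  # 1.00
```

Setting `efficiency` to 1.0 recovers the linear-scaling assumption from the earlier post, so the two estimates differ only in that one parameter.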

What happened to your formatting and being able to click on a picture to enlarge it? I'm on a 15" laptop and I can't make out any of the text in that picture, even if I try blowing it up in Chrome or Firefox.


Just wondering, roughly what difference is there on your 2060 Super at ultrawide with DLSS in Performance vs. Quality mode?
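For reference, the internal render resolution behind the Performance-vs-Quality question can be worked out from DLSS 2.x's per-axis scale factors. This sketch assumes the commonly cited factors (Quality = 2/3, Performance = 1/2 per axis); `internal_resolution` and `pixel_reduction` are hypothetical helpers, and the results say nothing about measured fps.

```python
from typing import Tuple

# Assumed per-axis render scale for DLSS 2.x modes
DLSS_SCALE = {"quality": 2 / 3, "performance": 1 / 2}


def internal_resolution(width: int, height: int, mode: str) -> Tuple[int, int]:
    """Resolution the GPU actually renders before DLSS upscales to width x height."""
    s = DLSS_SCALE[mode]
    return round(width * s), round(height * s)


def pixel_reduction(width: int, height: int, mode: str) -> float:
    """How many times fewer pixels are rendered than at native resolution."""
    w, h = internal_resolution(width, height, mode)
    return (width * height) / (w * h)


for mode in DLSS_SCALE:
    w, h = internal_resolution(3440, 1440, mode)
    print(f"{mode:>11}: {w}x{h}, {pixel_reduction(3440, 1440, mode):.2f}x fewer pixels")
# quality: 2293x960, 2.25x fewer pixels
# performance: 1720x720, 4.00x fewer pixels
```

At 3440x1440, Performance mode renders roughly half as many pixels as Quality mode. VRAM use likely won't fall by the same factor, since textures and other assets aren't scaled with the render resolution.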

The only exceptions are Control and Time Spy Extreme, where the 2080 Ti is just a hair quicker.


Who cares? There won't actually be any of these cards available at launch anyway. They only launched the 30 series when they did to make sure they beat AMD's projected dates. Typical Nvidia BS.

Does anyone have any idea why Blender (and Redshift too, I suppose) shows a much bigger difference between the 3070 and 2080 Ti than everything else?