@upgrade_1977

I define the 'real world' as the 'real user experience'. If my computer can max out my monitor's capabilities, any theoretical headroom beyond that is lost on me, the user, because all I can see is the limit of the weakest link in my hardware - the monitor. 120Hz monitors 'becoming' the standard is not the same as 'being' the standard. My argument is that you picked 2 benchies out of many, many others specifically to make BD look far worse than it actually is (not to say that it's great). If I were to cherry pick data, I could put up a few graphs where each of the discussed processors has the exact same frame rates. So we are discussing different things. You are talking about some FPS number that a computer can generate. Great. I'm talking about the end user experience. It's great that there are CPUs out there that can push 120 or more FPS, but I am certainly not going to pay more money to see a higher number on a benchmark. My question is: is my game running at max graphics and settings without noticeable frame drops or stutters? If the answer is yes (which it is for all AMD offerings, with the exception of a few hardware/software combinations), then any discussion of "better" or "faster" is pretty moot - my experience remains the same - so why would I pay more for the same experience?

Oh, and lemme cherry pick some graphs to make BD look good. Here's the FX-8150 vs the 2600K.

Let's see - HAWX:

http://www.tweaktown.com/reviews/4350/amd_fx_8150_bulldozer_gaming_performance_analysis/index5.html

8 FPS difference at highest resolution. Lowest FPS for AMD is 141. Will never see a difference on any monitor.

MAFIA 2

http://www.tweaktown.com/reviews/4350/amd_fx_8150_bulldozer_gaming_performance_analysis/index6.html

1 FPS difference at highest resolution. Lowest FPS for AMD is 64 - may see a difference on the best of monitors.

Lost Planet 2

http://www.tweaktown.com/reviews/4350/amd_fx_8150_bulldozer_gaming_performance_analysis/index7.html

1 FPS difference at highest resolution. 2 FPS difference at lowest. Lowest AMD FPS is 51 - vs 52 on the 2600K. Will never see a difference on any monitor.

AVP

http://www.tweaktown.com/reviews/4350/amd_fx_8150_bulldozer_gaming_performance_analysis/index8.html

1 FPS difference at highest resolution. Identical at all other resolutions. Will never see a difference on any monitor.

Metro 2033

http://www.tweaktown.com/reviews/4350/amd_fx_8150_bulldozer_gaming_performance_analysis/index9.html

1 FPS difference at highest, 4 FPS difference at lowest resolutions. Will never see a difference on any monitor.

Dirt 3

http://www.tweaktown.com/reviews/4350/amd_fx_8150_bulldozer_gaming_performance_analysis/index10.html

Same as above

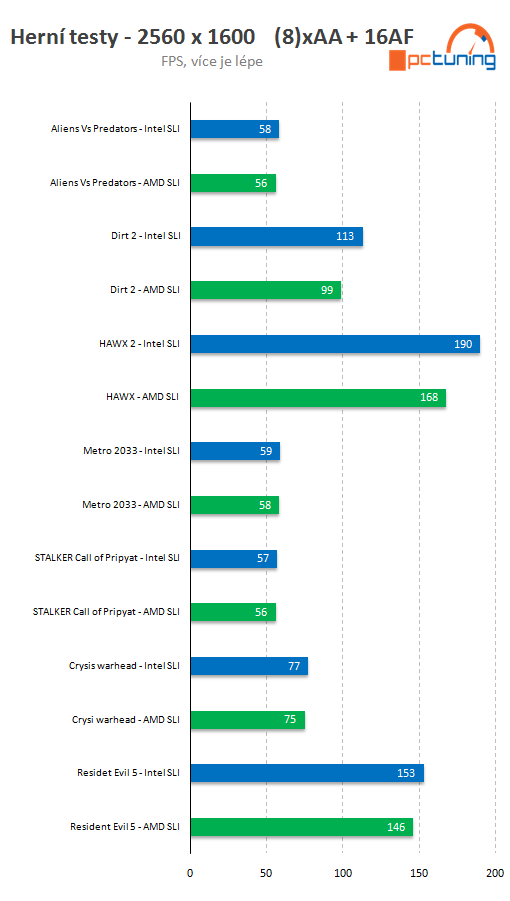

And here's an engineering sample pitted against the $1000 monster from Intel, all played at highest settings at max resolution (2560x1600):

http://wccftech.com/amd-bulldozer-4ghz-es-pitted-intel-core-i7990x-gaming-benchmarks/

A few FPS here and there, with the only real difference being in HAWX - that being 168 FPS vs 190 for Intel. A difference you will never see on any monitor in existence.
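
To put rough numbers on the "weakest link" argument, here's a quick Python sketch. It assumes the simplest possible model: the monitor shows at most its refresh rate, so anything rendered above that is thrown away. The function names are made up for illustration, and this ignores frame-time variance, tearing and input lag, so treat it as back-of-the-envelope only. The FPS figures are the ones quoted in this post.

```python
def visible_fps(rendered_fps, refresh_hz):
    """Frames per second the monitor can actually show.

    Assumption: a hard cap at the refresh rate, nothing fancier.
    """
    return min(rendered_fps, refresh_hz)


def visible_gap(fps_a, fps_b, refresh_hz):
    """Difference between two CPUs as seen on the screen, not in the benchmark."""
    return abs(visible_fps(fps_a, refresh_hz) - visible_fps(fps_b, refresh_hz))


# Figures quoted in this post: (AMD, Intel)
results = {
    "HAWX (ES vs 990X)": (168, 190),
    "Lost Planet 2 (FX-8150 vs 2600K)": (51, 52),
}

for refresh_hz in (60, 120):
    for game, (amd, intel) in results.items():
        gap = visible_gap(amd, intel, refresh_hz)
        print(f"{refresh_hz}Hz  {game}: benchmark gap {abs(amd - intel)} FPS, visible gap {gap} FPS")
```

Under that assumption, the 22 FPS HAWX gap shrinks to zero on both a 60Hz and a 120Hz monitor, and the Lost Planet 2 gap stays at 1 FPS either way.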

My point stands. In the real world, theoretical FPS means absolutely nothing. GPU >>> CPU. Save your money, buy the previous generation AMD for $150 (or less) and use the savings to buy a GTX 580 for FAAR more performance.

If, however, you only want the best of the best, then you should have gotten a Nehalem 990X a few years ago and you'd still have the fastest gaming CPU on the market with absolutely no need to upgrade. If you are like the rest of the world... well, the benchmarks speak for themselves. How much is a 5% gain in gaming FPS worth to you, especially when your monitor can't display it?

Posting from work, so need this disclaimer:

"The views expressed here are mine and do not reflect the official opinion of my employer or the organization through which the Internet was accessed."