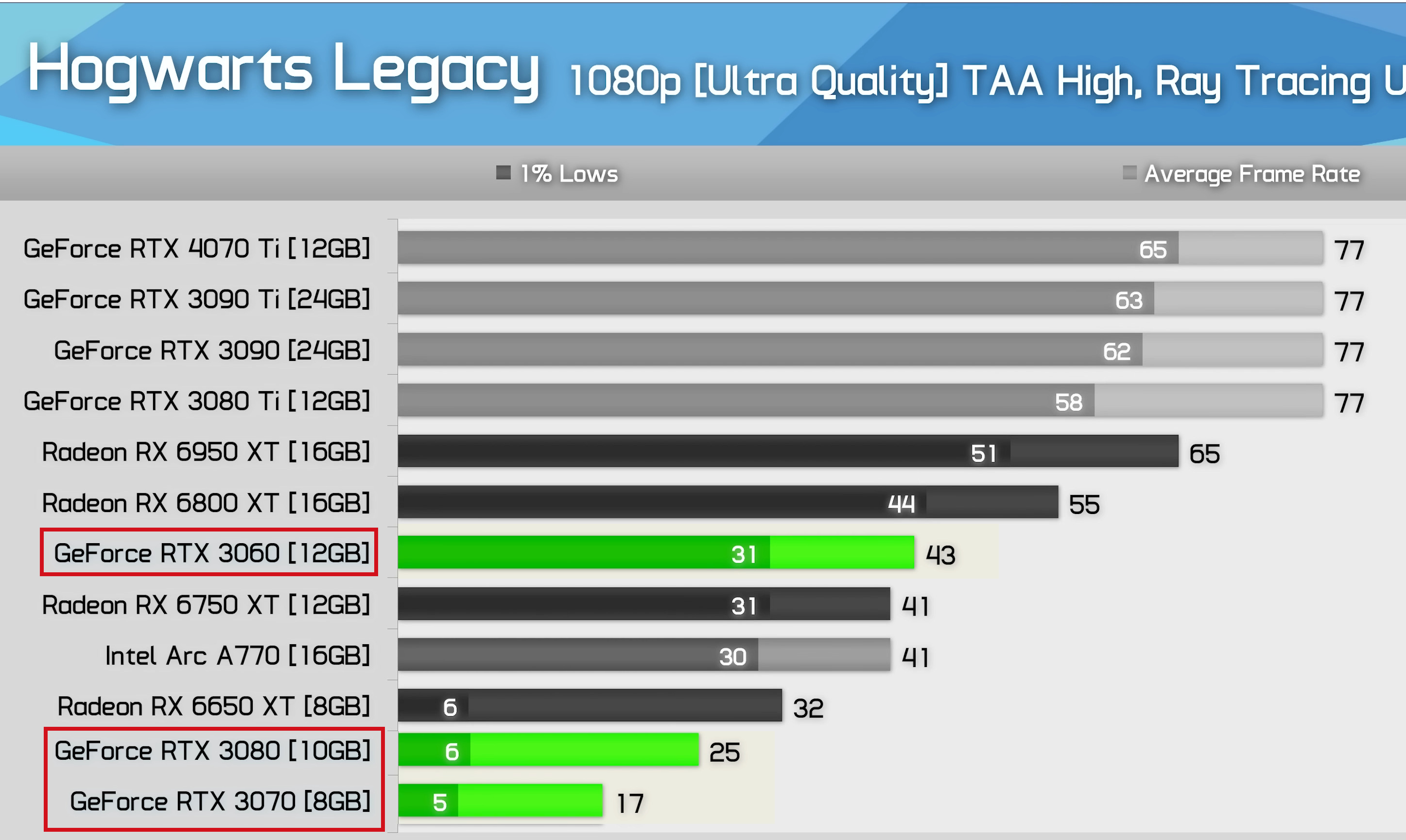

Easy fix, just don't play Hogwarts, lol.

The issue is not just that specific game; it is the trend going forward. Consoles now have more VRAM, and the vast majority of games are built for consoles first, with the PC version made afterward. As a result, nearly all of the development time is spent trying to take advantage of the hardware the console has to offer. This means that results like the Harry Potter game's will become more common in the years to come.

When the RTX 3080 first came out, I and many others called Nvidia out on the small amount of VRAM, since this was the expected outcome if you want to use a video card for more than 2 or so years. You will encounter games where the GPU is powerful enough to max out the settings, but the VRAM bottlenecks performance so much that you are forced to use lower settings.

One issue with games that have to be scaled back from console-level graphics is that the scaling process is often poorly optimized. The lower settings will often look really bad (far worse than many older games that ran far better).

The RTX 3000 series ended up being the shortest-lived generation of video cards.

P.S. In ARK: Survival Evolved, the RTX 3060 12GB performs better than the RTX 3070 8GB when you have a large base and many tamed dinos.

The RTX 3070 starts out much faster, but as soon as the number of visual assets increases significantly, it runs into a VRAM bottleneck.