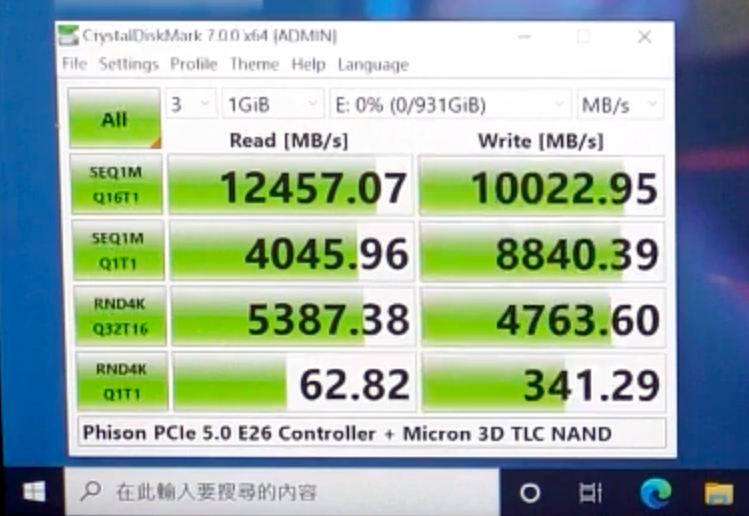

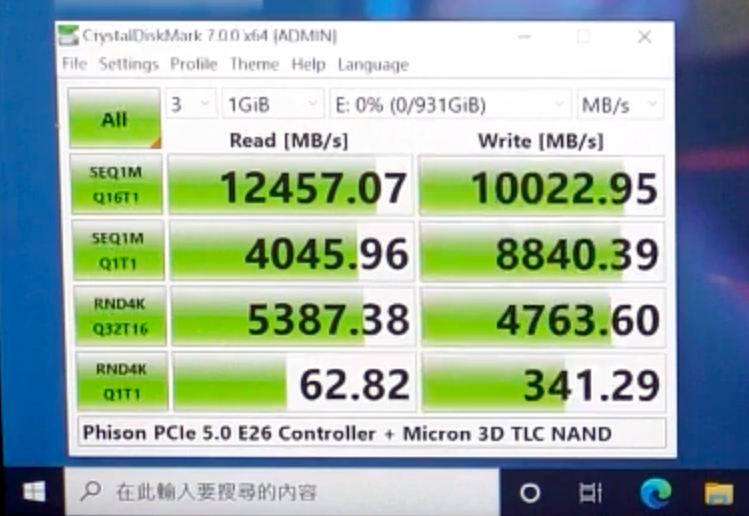

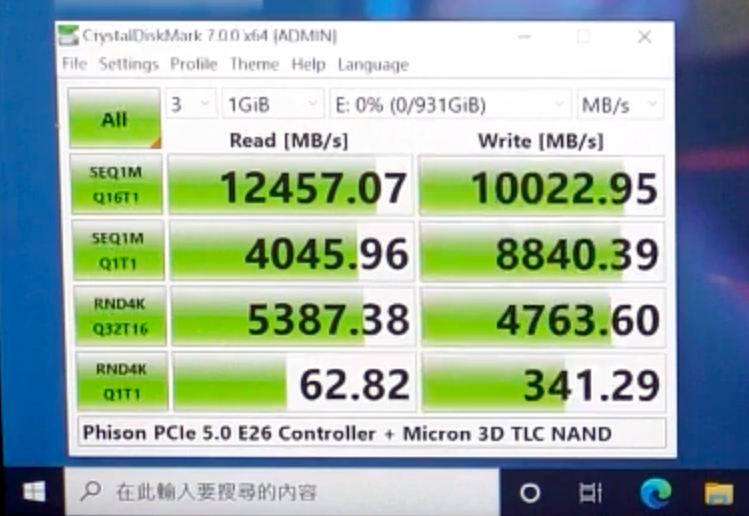

Phison showcases PS5026-E26 SSD with Micron's 3D TLC NAND memory.

Phison Demos M.2-2580 PCIe 5.0 x4 SSD: Up to 12GBps Reads

> For most of us, the bottom number impacts end-user "experience" more than any other: RND4K Q1T1 speed. And in that measure it's really no different from a 3.0 SSD.

I would argue that sequential Q1T1 has an impact on many consumer applications as well. Also, since this controller will target enterprise drives, the high queue depth metrics are meaningful to that segment.
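
Why Q1T1 barely moves between PCIe generations can be sketched with Little's law: with one request outstanding, throughput is capped at block size divided by per-request latency, and link speed never appears in the formula. A minimal, idealized sketch, assuming a hypothetical 80 µs end-to-end latency for a 4 KiB random read (an illustrative figure, not a measurement of this drive) and ignoring the bandwidth and IOPS ceilings that limit real drives at high queue depth:

```python
def random_read_throughput_mb_s(block_bytes: int, latency_s: float, queue_depth: int) -> float:
    """Little's law: throughput = requests in flight * bytes per request / latency.

    Idealized: assumes latency stays constant as queue depth grows and ignores
    the link, controller and NAND limits that cap real drives.
    """
    bytes_per_second = queue_depth * block_bytes / latency_s
    return bytes_per_second / 1e6  # decimal MB/s, as most benchmarks report


# Hypothetical latency; real values depend on the NAND, controller and OS stack.
LATENCY_S = 80e-6

for qd in (1, 32):
    mbps = random_read_throughput_mb_s(4096, LATENCY_S, qd)
    print(f"RND4K Q{qd}T1: {mbps:.1f} MB/s")
```

In this model only lower latency raises the QD1 figure, while the high queue depth figures scale with how many requests the drive can keep in flight, which is where a faster interface and controller show up.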

> Worthless; PCIe 3.0 to PCIe 4.0 NVMe didn't do anything for most.

That's because realizing the benefits of massively increased bandwidth and reduced latency is going to require a major overhaul of how most software loads data. Typical software reads data from storage at the point of use, waits for the read to return, then processes the data until it reaches the next point where it needs to read more, waits again, processes that, rinse and repeat. To leverage NVMe's full capabilities, software needs to be rewritten to load everything it can concurrently, ahead of processing, so it doesn't get stalled waiting on reads.
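
The difference between reading at the point of use and issuing reads ahead of time can be sketched in a few lines of Python. This is a toy model, not real storage code: `read_asset` is a hypothetical blocking read whose device wait is simulated with `time.sleep` at an assumed 20 ms per read, so the effect of overlapping waits is easy to see.

```python
import time
from concurrent.futures import ThreadPoolExecutor

READ_LATENCY_S = 0.02  # hypothetical per-read latency standing in for a real storage read


def read_asset(name: str) -> bytes:
    """Hypothetical blocking read; the sleep models waiting on the device."""
    time.sleep(READ_LATENCY_S)
    return name.encode()


def load_serially(names):
    # Read at the point of use: each read must finish before the next one is issued.
    return [read_asset(n) for n in names]


def load_concurrently(names, max_workers=8):
    # Issue the reads up front so their waits overlap; map() keeps results in input order.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(read_asset, names))


if __name__ == "__main__":
    assets = [f"asset{i}" for i in range(16)]
    for loader in (load_serially, load_concurrently):
        start = time.perf_counter()
        data = loader(assets)
        print(f"{loader.__name__}: {len(data)} reads in {time.perf_counter() - start:.2f} s")
```

Both loaders return the same data; only the concurrent one gives the drive more than one request to work on at a time, which is what the higher queue depth benchmark numbers measure.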

> You will only experience the speed in sequential read/write situations. The serial nature of I/O means you won't benefit much when reading or writing small files. This, unfortunately, is typical of the end-user environment.

In the case of games, and likely some software with a boatload of plugins, there would be significant potential performance benefits to parallelizing loads to whatever extent dependencies allow and prioritizing them accordingly to minimize bottlenecks. While I/O to the raw block device may be serial, a lot of the processing that happens afterwards doesn't need to be, and that is where the biggest bottleneck currently is.
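
Parallelizing loads "to whatever extent dependencies allow" maps naturally onto a dependency-graph scheduler. A minimal sketch using the standard library's `graphlib.TopologicalSorter` and a thread pool: each asset is submitted as soon as everything it depends on has finished, so independent assets load and process side by side. The asset graph and `load_asset` are hypothetical stand-ins for real engine code, and the prioritization the post mentions is not modeled.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def load_with_dependencies(deps, load, max_workers=4):
    """Run load(name, dep_results) for every asset as soon as its dependencies finish.

    deps maps each asset to the set of assets it depends on.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    results = {}
    in_flight = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Submit every asset whose dependencies have all been loaded.
            for name in sorter.get_ready():
                dep_results = {d: results[d] for d in deps.get(name, ())}
                in_flight[pool.submit(load, name, dep_results)] = name
            # Block until at least one job finishes, then unlock its dependents.
            done, _ = wait(in_flight, return_when=FIRST_COMPLETED)
            for future in done:
                name = in_flight.pop(future)
                results[name] = future.result()
                sorter.done(name)
    return results


def load_asset(name, dep_results):
    # Hypothetical loader: a real engine would read and decode the asset here.
    return f"{name}[{','.join(sorted(dep_results))}]"


assets = {"level": {"mesh", "texture"}, "mesh": {"shader"}}
print(load_with_dependencies(assets, load_asset)["level"])  # level[mesh,texture]
```

Here `shader` and `texture` start together, `mesh` starts once `shader` is done, and `level` waits only for the two assets it actually needs.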

> The processing that happens afterwards is done by the CPU, not the SSD, and the data needed will be stored in RAM instead.

With DirectStorage, processing can be done on the GPU for data intended to go there, and it doesn't necessarily have to go through system memory or the CPU first. As for parallelizing loads, you need enough SSD I/O bandwidth, low enough SSD latency, and high enough SSD IOPS to feed the 12-32 CPU threads and GPU(s) that could be attempting to load and process data concurrently in software aiming for practically nonexistent load-screen times and asset pop-in.
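
How bandwidth, latency and IOPS tie together can be worked out with Little's law: outstanding requests = IOPS × latency. A minimal sketch, assuming purely for illustration that the 12 GB/s headline figure had to be reached with 4 KiB reads at a hypothetical 80 µs latency (the headline is a sequential figure, and neither assumption is a measurement of this drive):

```python
import math


def requests_in_flight(target_bytes_per_s: float, block_bytes: int, latency_s: float) -> int:
    """Little's law: outstanding requests = request rate (IOPS) * latency, rounded up."""
    iops = target_bytes_per_s / block_bytes
    return math.ceil(iops * latency_s)


# Hypothetical workload: 12 GB/s of 4 KiB reads at an assumed 80 us per read.
target = 12e9
iops_needed = target / 4096
print(f"IOPS needed: {iops_needed:,.0f}")
print(f"Requests in flight: {requests_in_flight(target, 4096, 80e-6)}")
```

Under these assumptions a few hundred reads must be outstanding at all times, far more than one thread issuing blocking reads can supply, which is why the load has to be spread across many threads or submitted asynchronously.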

As for parallelizing loads, that can only be done by the software and the CPU. The SSD is only responsible for fetching the data the CPU requests.